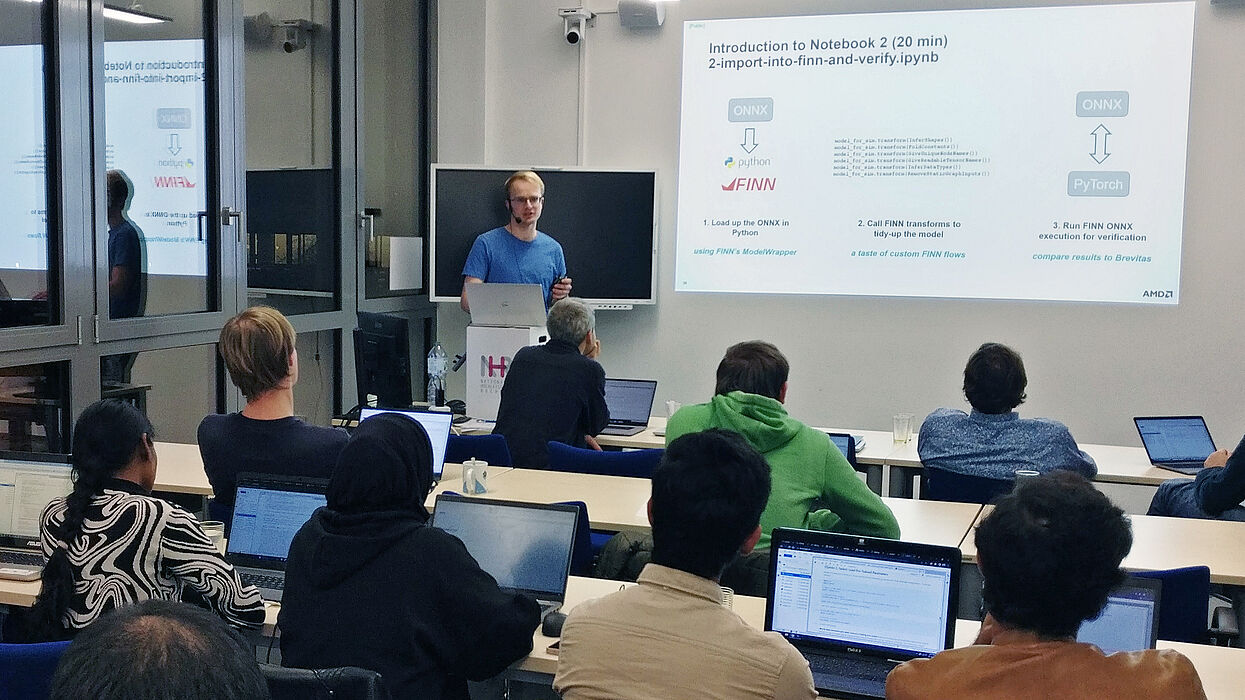

On 12 December 2023, the first hands-on workshop on efficient neural network inference with FPGAs took place at the Paderborn Center for Parallel Computing (PC2). The workshop was organized and hosted by PC2 in cooperation with the Computer Engineering Group of Paderborn University, both of which are partners in the eki project.

The eki project started in January 2023 and runs for three years. It is funded by the German Federal Ministry for the Environment, Nature Conservation, Nuclear Safety and Consumer Protection under the funding line “AI Lighthouses for Environment, Climate, Nature, and Resources”. Its main goal is to increase the energy efficiency of deep neural network (DNN) inference in AI systems through approximation techniques and mapping onto high-end FPGA systems.

Two methodological approaches are applied. First, DNN models are compressed by network pruning. Second, the compressed DNNs are mapped directly into hardware using the open-source framework FINN. FINN generates a specialized dataflow architecture that maps a DNN layer by layer onto the hardware, achieving very high throughput and very low latency. The DNN parameters can use fixed-point formats down to binary (1-bit) quantization. Strong quantization reduces the required hardware resources as well as the number of external memory accesses, which contribute significantly to the energy budget. For training, FINN relies on Brevitas, a PyTorch library for training quantized DNN models.
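A small, self-contained sketch can make the compression steps above more concrete. It is plain Python and is not FINN or Brevitas code. All function names are hypothetical. Magnitude pruning is assumed as the pruning criterion, since the text does not name one. The quantizer is a simple post-training symmetric fixed-point scheme, not the quantization-aware training that Brevitas performs. The sketch shows how pruning zeroes weights, how fixed-point and binary quantization reduce each weight to a few bits, and how this shrinks the amount of weight data that has to be stored and moved.

```python
import math


def prune_by_magnitude(weights, sparsity):
    """Hypothetical helper (assumed magnitude criterion): zero the `sparsity`
    fraction of weights with the smallest absolute value."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:k]:
        pruned[i] = 0.0
    return pruned


def quantize_symmetric(weights, bits):
    """Hypothetical helper: post-training symmetric fixed-point quantization
    (bits >= 2) with one per-tensor scale, so the largest |w| maps to the
    largest integer code."""
    if bits < 2:
        raise ValueError("use binarize() for 1-bit weights")
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for 4-bit codes in [-7, 7]
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    scale = max_abs / qmax
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def binarize(weights):
    """Hypothetical helper: 1-bit quantization that keeps only each weight's sign."""
    return [1 if w >= 0 else -1 for w in weights]


def storage_bytes(num_weights, bits):
    """Bytes needed to store `num_weights` parameters at `bits` bits each."""
    return math.ceil(num_weights * bits / 8)


if __name__ == "__main__":
    w = [0.8, -0.05, 0.3, -0.9, 0.01, 0.5, -0.2, 0.07]
    pruned = prune_by_magnitude(w, 0.5)
    codes, scale = quantize_symmetric(pruned, 4)
    print(pruned)       # [0.8, 0.0, 0.3, -0.9, 0.0, 0.5, 0.0, 0.0]
    print(codes)        # [6, 0, 2, -7, 0, 4, 0, 0]
    print(binarize(w))  # [1, -1, 1, -1, 1, 1, -1, 1]
    for bits in (32, 8, 4, 1):
        print(bits, storage_bytes(1_000_000, bits))
```

For one million parameters, the storage estimate falls from 4,000,000 bytes at 32-bit floating point to 500,000 bytes at 4 bits and 125,000 bytes with binary weights. That is a 32x reduction, which illustrates why the text links strong quantization to fewer external memory accesses. Actual savings on an FPGA also depend on how the generated architecture lays out and streams the weights.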

The half-day workshop had two main objectives: understanding the concepts behind FINN and using FINN in practical hands-on sessions on the FPGAs of Noctua 2. To lay the groundwork for FINN's more advanced concepts, the event included two introductory sessions on Field Programmable Gate Arrays (FPGAs) and neural networks.

Afterwards, participants trained a neural network themselves and transformed the trained network into an FPGA design, guided by a JupyterHub tutorial notebook. They then executed this design on the AMD Xilinx Alveo U280 datacenter FPGA cards available in the Noctua 2 cluster and observed the performance gains for the network they had trained.

A second workshop next year will extend the topics of this year's hands-on workshop to include executing a single neural network across multiple interconnected FPGAs.