On-the-Fly Machine Learning

Prof. Dr. Eyke Hüllermeier, Universität Paderborn

Most sub-fields of computer science deal with the creation of algorithms or parts thereof, such as data structures. On an abstract level, an algorithm can be seen as a function mapping inputs to outputs. Originally, and still today, many algorithms are carefully designed by hand in order to accomplish a certain task, such as finding the shortest path from one point to another in a graph. This requires the designer both to have a strategy for solving the task of interest and, equally importantly, to be able to describe formally how this strategy works. Unfortunately, there exist problems for which algorithm designers, or even humans in general, are unable to describe the strategy they use for solving them. For example, deciding whether a picture shows a cat or a dog is not too hard for most people, even children. But describing how this problem is solved is a completely different story. Hence, a formal description of the identification process is deemed impossible, and the classical manual algorithm design approach is unsuitable for such problems.

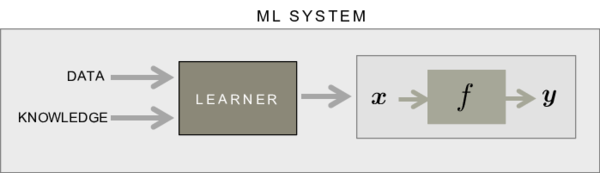

Machine learning can be viewed as an alternative approach to creating such a system, one that returns a desired output for a given input. Instead of manually specifying the behavior of the system, machine learning deals with inducing such a system, usually called a model, from supplied data. In its most basic form, this data consists of example pairs of inputs and outputs of the system to be constructed.
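To make the idea of inducing a model from example pairs concrete, the following is a minimal sketch using a 1-nearest-neighbor rule. The function name `fit_nearest_neighbor`, the two numeric features, and the toy data are assumptions chosen purely for illustration; they do not come from the work described here.

```python
from math import dist


def fit_nearest_neighbor(examples):
    """Induce a model (a function from inputs to outputs) from example pairs.

    `examples` is a list of (input_vector, output_label) pairs. The returned
    model answers a query with the label of the closest stored input.
    """
    stored = list(examples)

    def model(x):
        _, label = min(stored, key=lambda pair: dist(pair[0], x))
        return label

    return model


# Hypothetical toy data: (weight in kg, height in cm) -> label.
training_data = [
    ((4.0, 25.0), "cat"),
    ((5.0, 23.0), "cat"),
    ((20.0, 55.0), "dog"),
    ((30.0, 60.0), "dog"),
]

# Nobody wrote down a rule for telling cats from dogs; the behavior of
# `model` is determined entirely by the supplied examples.
model = fit_nearest_neighbor(training_data)
print(model((6.0, 27.0)))
```

The point of the sketch is only the shape of the process: examples go in, and a function from inputs to outputs comes out.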

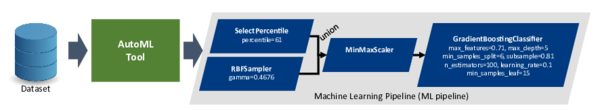

Due to the extensive digitalization of almost all areas of life (e.g., Industry 4.0, social media platforms, IoT), vast amounts of data are generated daily, and the trend is still rising. To make use of the information contained in such data, the data has to be acquired, analyzed, and processed for the task in question. In a subsequent step, a machine learning model has to be tailored to and trained on the preprocessed data. This results in a sequence of successively executed algorithms, also referred to as a machine learning pipeline. The execution of the pipeline is then usually followed by the representation and/or interpretation of the model's results.
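A pipeline in this sense can be sketched as a preprocessing step followed by a learner, both fitted on the same data and then applied in sequence. The example below is a minimal sketch under stated assumptions: min-max scaling and a nearest-centroid classifier stand in for arbitrary pipeline steps, and all function names and data are hypothetical.

```python
from math import dist


def fit_scaler(rows):
    """Preprocessing step: learn per-feature ranges, return a rescaling function."""
    lows = [min(column) for column in zip(*rows)]
    # Fall back to 1.0 for constant features to avoid division by zero.
    spans = [(max(column) - min(column)) or 1.0 for column in zip(*rows)]

    def scale(row):
        return tuple((v - lo) / span for v, lo, span in zip(row, lows, spans))

    return scale


def fit_nearest_centroid(rows, labels):
    """Learning step: store one mean vector (centroid) per class."""
    grouped = {}
    for row, label in zip(rows, labels):
        grouped.setdefault(label, []).append(row)
    centroids = {
        label: tuple(sum(column) / len(members) for column in zip(*members))
        for label, members in grouped.items()
    }

    def predict(row):
        return min(centroids, key=lambda label: dist(centroids[label], row))

    return predict


def fit_pipeline(rows, labels):
    """Fit the steps in order and return their composition."""
    scale = fit_scaler(rows)
    predict = fit_nearest_centroid([scale(row) for row in rows], labels)

    def pipeline(row):
        return predict(scale(row))

    return pipeline


# Hypothetical toy data: (weight in kg, height in cm) with class labels.
rows = [(4.0, 25.0), (5.0, 23.0), (20.0, 55.0), (30.0, 60.0)]
labels = ["cat", "cat", "dog", "dog"]
pipeline = fit_pipeline(rows, labels)
print(pipeline((6.0, 27.0)))
```

Each step could be swapped for a different algorithm or configured with different parameters, which is where the large number of candidate pipelines comes from.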

Research in the area of data science has produced many different algorithms for each step of this pipeline, which in turn offer degrees of freedom in the form of parameters that allow fine-tuning to the respective data. Altogether, this results in a wide variety of possible candidates (≫ 10^50 [1, 2, 3]). Unfortunately, there is no single algorithm for each step that is best across all kinds of datasets; the right choice depends heavily on the concrete task and the respective data.

As a consequence, for each new task, such a pipeline has to be designed from scratch to achieve satisfying performance, which requires expertise in data science.

However, most developers in application domains, let alone end users, are neither data scientists nor machine learning experts, and the number of experts in this field is so limited that the demand for their expertise cannot nearly be satisfied. Accordingly, there is an urgent need for tools that are easy to use and offer high-quality ML solutions to end users without requiring constant interaction with an ML expert. Ideally, such a tool automates the whole process of creating an ML system that is ready for operation by end users, starting with data preprocessing, continuing with the choice of a model class and the training and evaluation of a model, and ending with the representation and interpretation of the results. The research field of automated machine learning (AutoML) has emerged from this demand and vision.

In the last couple of years, AutoML has established itself as a hot and influential topic. In spite of the short history of AutoML, impressive results have been obtained by AutoML tools such as Auto-WEKA [1], auto-sklearn [2], and TPOT [4]. Quite recently, we have advanced the state of the art in AutoML with another tool called ML-Plan [3, 5, 6, 7], which performs competitively and, in several cases, is even superior to the existing approaches. While the approaches differ significantly in the way they search for the most appropriate candidate, these tools have one thing in common: during the search process, several potential solutions have to be trained and evaluated on the actual data. This is already computationally intensive for a single candidate, and usually many candidates have to be considered. In fact, the training and evaluation of an ML pipeline can take up to several hours or even days.

Parallelizing the evaluation of ML pipelines cannot make an exhaustive search of the space of possible solutions feasible, since the search space is far too large. Within a given time frame, however, parallelization has a positive effect on the quality of the returned solution, as multiple candidates can be considered at the same time. Normally, several hundred or even thousands of such pipelines need to be considered in a single run.
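The effect of parallel candidate evaluation within a fixed time frame can be sketched as follows. This is a minimal illustration, not the search strategy of ML-Plan or of any other tool mentioned here: it simply enumerates a tiny, hypothetical candidate space (scaling on or off, number of neighbors `k`), evaluates the candidates concurrently, and keeps the best one that finishes within the budget. All names and data are assumptions. Threads are used only to keep the sketch self-contained; for CPU-bound training, separate processes or cluster nodes would be the more realistic choice.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor, as_completed
from concurrent.futures import TimeoutError as FuturesTimeout
from itertools import product
from math import dist


def build_pipeline(candidate, train):
    """Train the pipeline described by candidate = (use_scaling, k)."""
    use_scaling, k = candidate
    rows = [x for x, _ in train]
    lows = [min(column) for column in zip(*rows)]
    spans = [(max(column) - min(column)) or 1.0 for column in zip(*rows)]

    def transform(x):
        if not use_scaling:
            return tuple(x)
        return tuple((v - lo) / span for v, lo, span in zip(x, lows, spans))

    stored = [(transform(x), y) for x, y in train]

    def predict(x):
        z = transform(x)
        nearest = sorted(stored, key=lambda pair: dist(pair[0], z))[:k]
        return Counter(y for _, y in nearest).most_common(1)[0][0]

    return predict


def evaluate(candidate, train, valid):
    """Train one candidate and return its accuracy on the validation data."""
    predict = build_pipeline(candidate, train)
    return sum(predict(x) == y for x, y in valid) / len(valid)


def parallel_search(candidates, train, valid, budget_seconds, workers=4):
    """Return the best (candidate, score) among those finished within the budget.

    Returns (None, -1.0) if no candidate finishes in time.
    """
    best_score, best_candidate = -1.0, None
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {pool.submit(evaluate, c, train, valid): c for c in candidates}
        try:
            for future in as_completed(futures, timeout=budget_seconds):
                score = future.result()
                if score > best_score:
                    best_score, best_candidate = score, futures[future]
        except FuturesTimeout:
            # Budget exhausted: drop evaluations that have not started yet.
            for future in futures:
                future.cancel()
    return best_candidate, best_score


# Hypothetical toy data and candidate space.
train = [
    ((4.0, 25.0), "cat"), ((5.0, 23.0), "cat"), ((3.5, 24.0), "cat"),
    ((20.0, 55.0), "dog"), ((30.0, 60.0), "dog"), ((25.0, 50.0), "dog"),
]
valid = [((4.5, 26.0), "cat"), ((22.0, 52.0), "dog")]
candidates = list(product([False, True], [1, 3]))

best_candidate, best_score = parallel_search(candidates, train, valid, budget_seconds=5.0)
print(best_candidate, best_score)
```

With more workers, more candidates complete before the budget runs out, so the best score found can only stay the same or improve. The search space itself remains far too large to enumerate.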

Accordingly, to advance research in this field, enormous amounts of computational resources are required, as such tools need to be evaluated thoroughly on a broad spectrum of different datasets. In our case, these resources are generously provided by PC2. We have used the PC2 clusters intensively for experiments investigating new methods and techniques to further improve the solution quality and efficiency of our AutoML tool ML-Plan. Due to the increasing number of competitors, AutoML scenarios, and benchmark datasets, we plan to ramp up our experiments to over 2000 CPU years in the upcoming months.

When AutoML is moved to a cloud environment and machine learning functionality is provided on-the-fly as a service, it becomes an application domain of “On-The-Fly Computing”, which we refer to as “On-The-Fly Machine Learning” (OTF-ML) [8, 9]. The Collaborative Research Center (CRC) 901 of the same name, “On-The-Fly Computing”, deals with the automatic on-the-fly configuration and provision of individual IT services out of base services that are available on world-wide markets. ML-Plan has been developed within the scope of CRC 901 at Paderborn University and constitutes the core of our vision of OTF-ML. On the one hand, with OTF-ML, we want to enable end users who do not necessarily have sufficient hardware resources to run AutoML on their own devices to use machine learning functionality tailored to their needs. On the other hand, a cloud environment offers more degrees of freedom to scale, for example by running more candidate evaluations in parallel, by distributing the entire search process, or by sharing resources between similar requests.

PC2 forms the foundation for all further work in this direction.

References

[1] Chris Thornton, Frank Hutter, Holger H. Hoos, and Kevin Leyton-Brown. Auto-WEKA: Combined selection and hyperparameter optimization of classification algorithms. In The 19th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2013, Chicago, IL, USA, pages 847–855, 2013.

[2] Matthias Feurer, Aaron Klein, Katharina Eggensperger, Jost Springenberg, Manuel Blum, and Frank Hutter. Efficient and robust automated machine learning. In Advances in Neural Information Processing Systems, pages 2962–2970, 2015.

[3] Felix Mohr, Marcel Wever, and Eyke Hüllermeier. ML-Plan: Automated machine learning via hierarchical planning. Machine Learning, 107(8-10):1495–1515, 2018.

[4] Randal S. Olson and Jason H. Moore. TPOT: A tree-based pipeline optimization tool for automating machine learning. In Workshop on Automatic Machine Learning, pages 66–74, 2016.

[5] Marcel Wever, Felix Mohr, and Eyke Hüllermeier. ML-Plan for unlimited-length machine learning pipelines. In AutoML Workshop at ICML, 2018.

[6] Marcel Wever, Felix Mohr, and Eyke Hüllermeier. Automated multi-label classification based on ML-Plan. CoRR, abs/1811.04060, 2018.

[7] Marcel Wever, Felix Mohr, Alexander Tornede, and Eyke Hüllermeier. Automating multi-label classification extending ML-Plan. In AutoML Workshop at ICML, 2019.

[8] Felix Mohr, Marcel Wever, and Eyke Hüllermeier. Automated machine learning service composition. CoRR, abs/1809.00486, 2018.

[9] Felix Mohr, Marcel Wever, Eyke Hüllermeier, and Amin Faez. (WIP) Towards the automated composition of machine learning services. In 2018 IEEE International Conference on Services Computing, SCC 2018, San Francisco, CA, USA, July 2-7, 2018, pages 241–244, 2018.